Stable Diffusion is now available as a Keras implementation (republished with an auto-generated mem.ai summary below)

Francois Chollet recently tweeted that Stable Diffusion is now available as a Keras implementation, thanks to @divamgupta!

My takeaways from reading the announcement and its thread:

Porting Stable Diffusion from PyTorch to TensorFlow might yield a performance advantage, thanks to the option of compiling the model with XLA.

Francois Chollet encourages readers to read through the code, since it is brief.

That is nice, but I did not expect what I found next: a user had replied to the announcement with a “@memdotai mem it” message.

This message triggered a remarkable result: mem.ai created a one-page summary including:

- An AI-generated summary

- Twitter threads

- Embedded code with explanations

It also shows recommendations for other relevant Twitter threads.

I like how seamlessly mem.ai went through the threads, extracting critical information and neatly formatting the content.

Enjoy!

https://twitter.com/fchollet/status/1574782176633430017

🪄Smart summary:

The thread discusses the Stable Diffusion model, which is now available in KerasCV. For a batch of 3 images on an NVIDIA T4 GPU, the model is 30% faster than the PyTorch version, and the image generation loop is only ~100 lines.

---

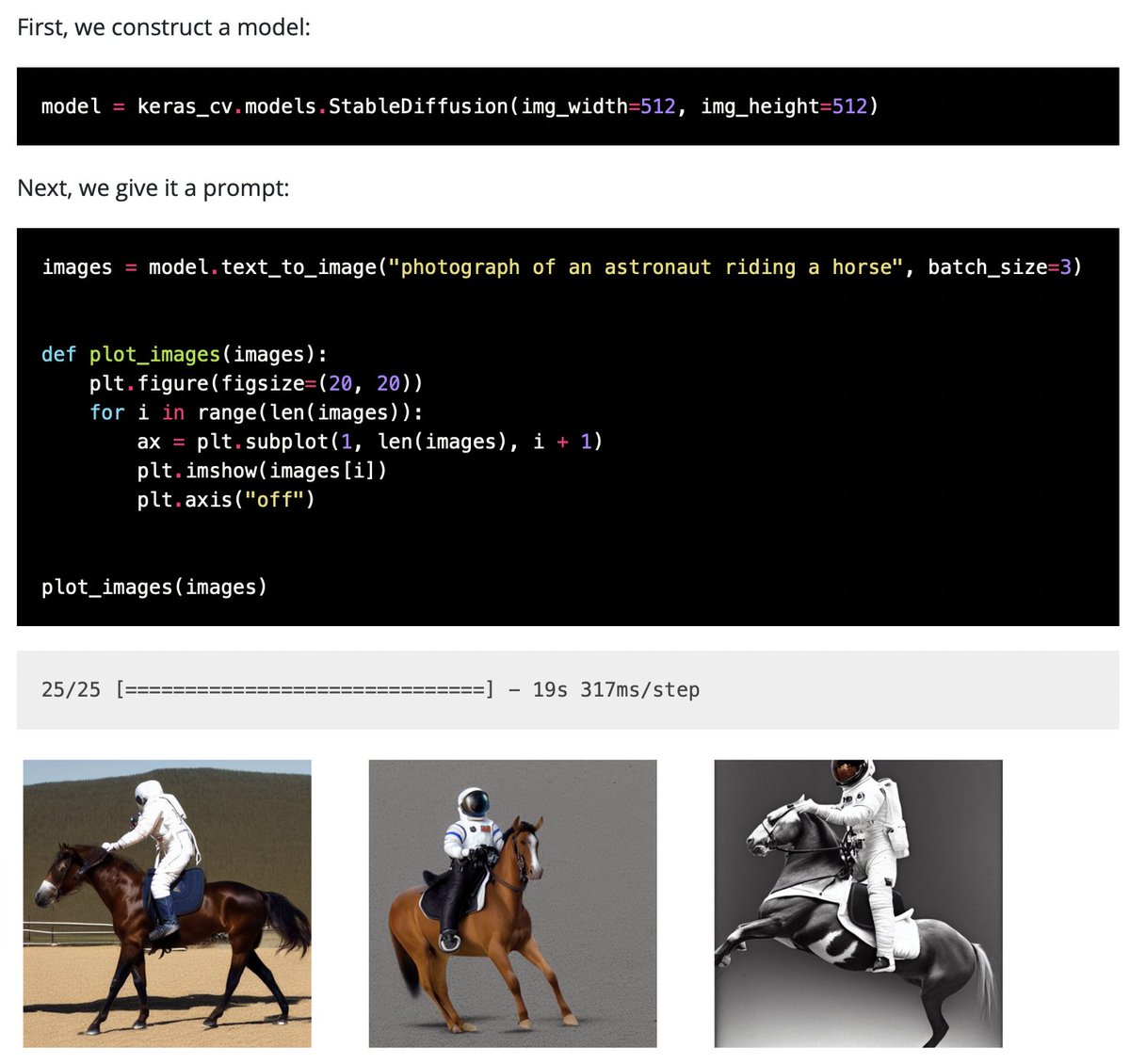

Stable Diffusion is now available directly in KerasCV!

And it’s fast: 30% faster than the PyTorch version for a batch of 3 images on the NVIDIA T4 GPU (which is the GPU you typically get on Colab).

Try it out: https://t.co/a7lFP8kIBv

If you want to learn more about how Stable Diffusion works, I encourage you to check out the implementation. The model itself is only ~350 lines across 3 files. The image generation loop is ~100 lines.

It gives you a good idea of what it’s like to work with Keras.

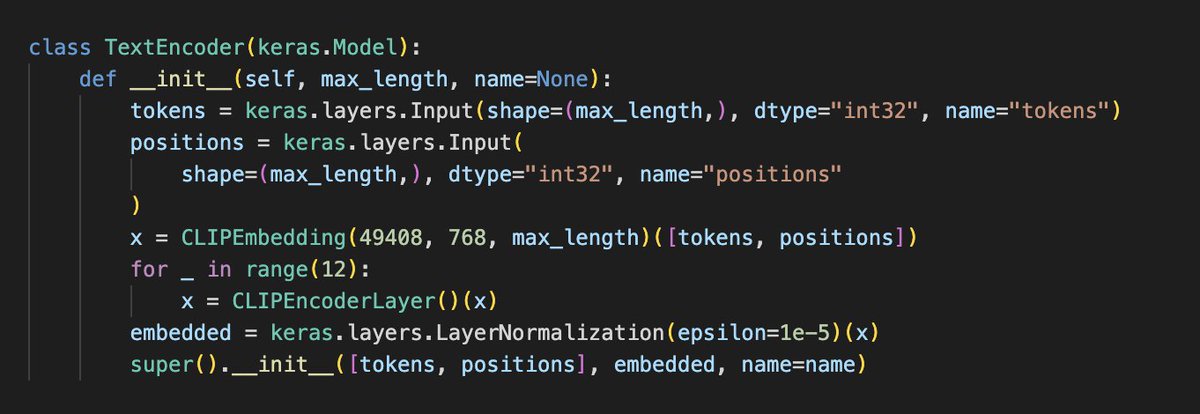

This is the text encoder (and its subcomponents): 87 LOC

https://t.co/Ia8dioghJR
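To make the text-encoder tweet a little more concrete, here is a minimal NumPy sketch of two ingredients a CLIP-style text encoder (the kind Stable Diffusion uses) relies on: summed token and position embeddings, and causally masked self-attention. It is not the KerasCV code. The toy sizes, the random tables, and the helper names `embed`, `causal_mask` and `masked_self_attention` are hypothetical, and the attention deliberately omits the learned query/key/value projections a real layer has.

```python
import numpy as np

# Hypothetical toy sizes. For reference, the CLIP text encoder used by
# Stable Diffusion v1 has a 49408-token vocabulary, 77-token sequences
# and 768-dimensional embeddings.
VOCAB_SIZE, MAX_LENGTH, EMBED_DIM = 100, 8, 16

rng = np.random.default_rng(0)
token_table = rng.normal(size=(VOCAB_SIZE, EMBED_DIM))     # stand-in for learned weights
position_table = rng.normal(size=(MAX_LENGTH, EMBED_DIM))  # stand-in for learned weights


def embed(token_ids):
    """Sum a token embedding and a position embedding for each token."""
    token_ids = np.asarray(token_ids)
    positions = np.arange(token_ids.shape[-1])
    return token_table[token_ids] + position_table[positions]


def causal_mask(length):
    """Additive mask: 0 where attention is allowed, -inf above the diagonal."""
    upper = np.triu(np.ones((length, length), dtype=bool), k=1)
    return np.where(upper, -np.inf, 0.0)


def masked_self_attention(x):
    """Single-head self-attention without learned projections, causally masked."""
    scores = x @ x.T / np.sqrt(x.shape[-1]) + causal_mask(x.shape[0])
    # Softmax over each row; the diagonal is always finite, so the row max is too.
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ x
```

Because of the mask, each token only attends to itself and earlier tokens; in particular, the first token's output is exactly its own embedding.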

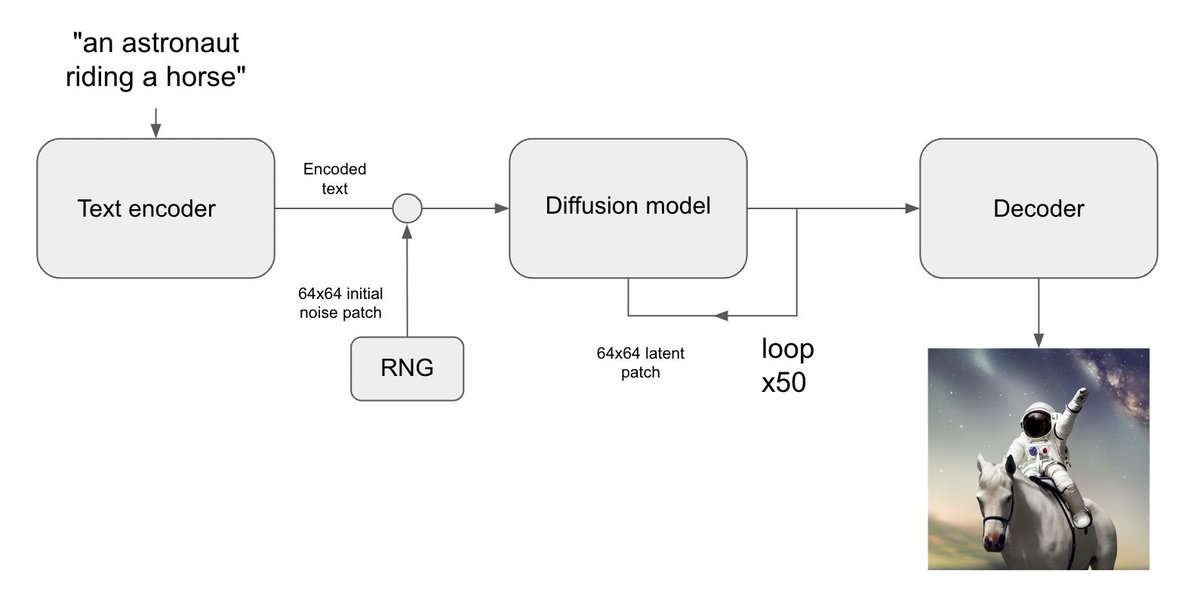

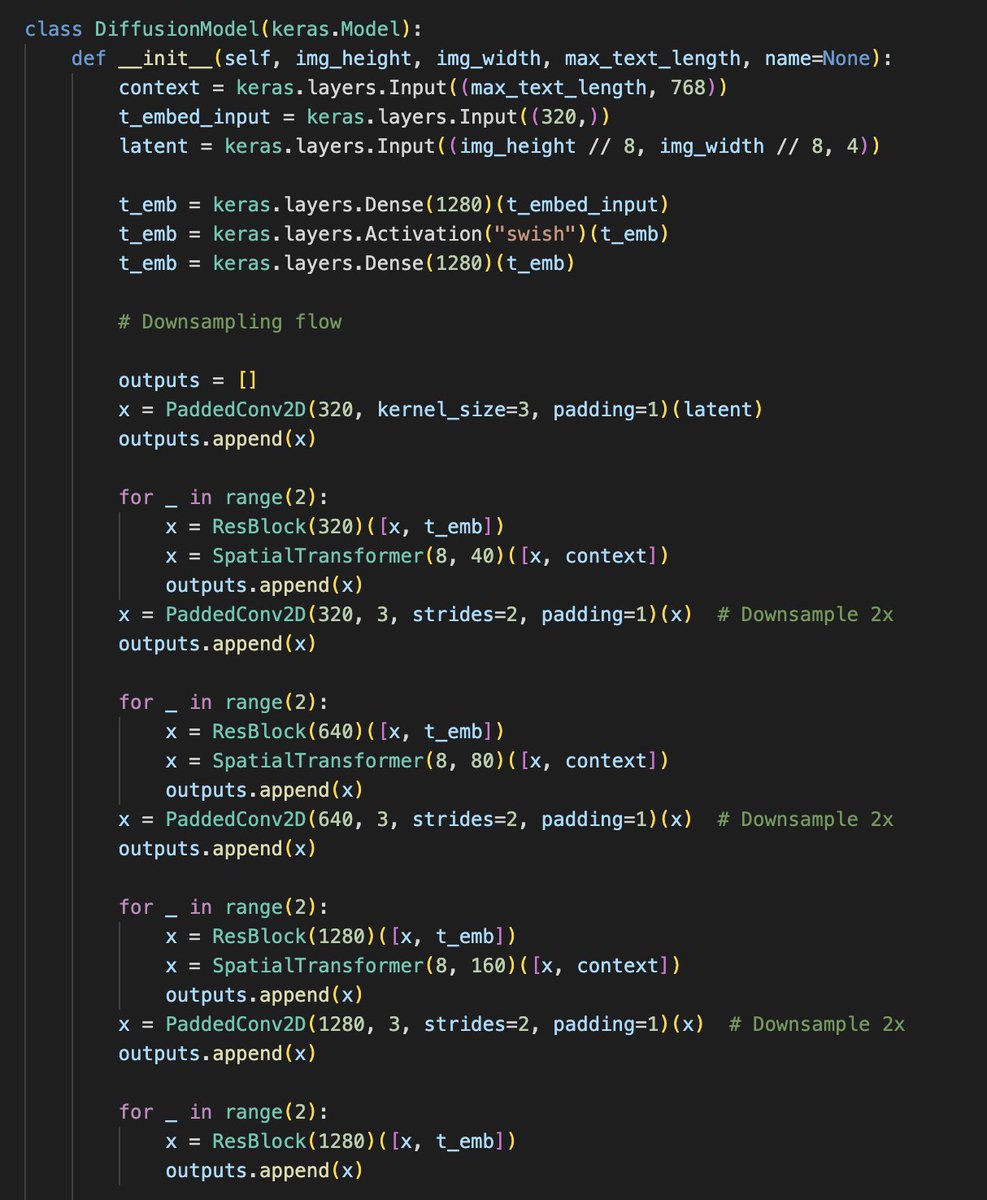

This is the Diffusion UNet. A bit more hefty: 181 LOC in total. https://t.co/P4AJaF31vj
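Besides the noisy latent and the text embedding, the UNet also needs to know the current timestep. Diffusion UNets commonly encode it with a sinusoidal embedding. The sketch below assumes that scheme; `dim=320`, `max_period=10000`, the cos-then-sin ordering, and the helper name `timestep_embedding` are assumptions rather than details quoted from the linked file.

```python
import math

import numpy as np


def timestep_embedding(timestep, dim=320, max_period=10000):
    """Sinusoidal embedding of a diffusion timestep (assumed settings)."""
    half = dim // 2
    # Geometrically spaced frequencies from 1 down to roughly 1 / max_period.
    freqs = np.exp(-math.log(max_period) * np.arange(half, dtype=np.float32) / half)
    args = timestep * freqs
    # First half cosines, second half sines.
    return np.concatenate([np.cos(args), np.sin(args)])
```

Each (cos, sin) pair lies on the unit circle, so nearby timesteps get similar embeddings while distant ones stay distinguishable across the different frequencies.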

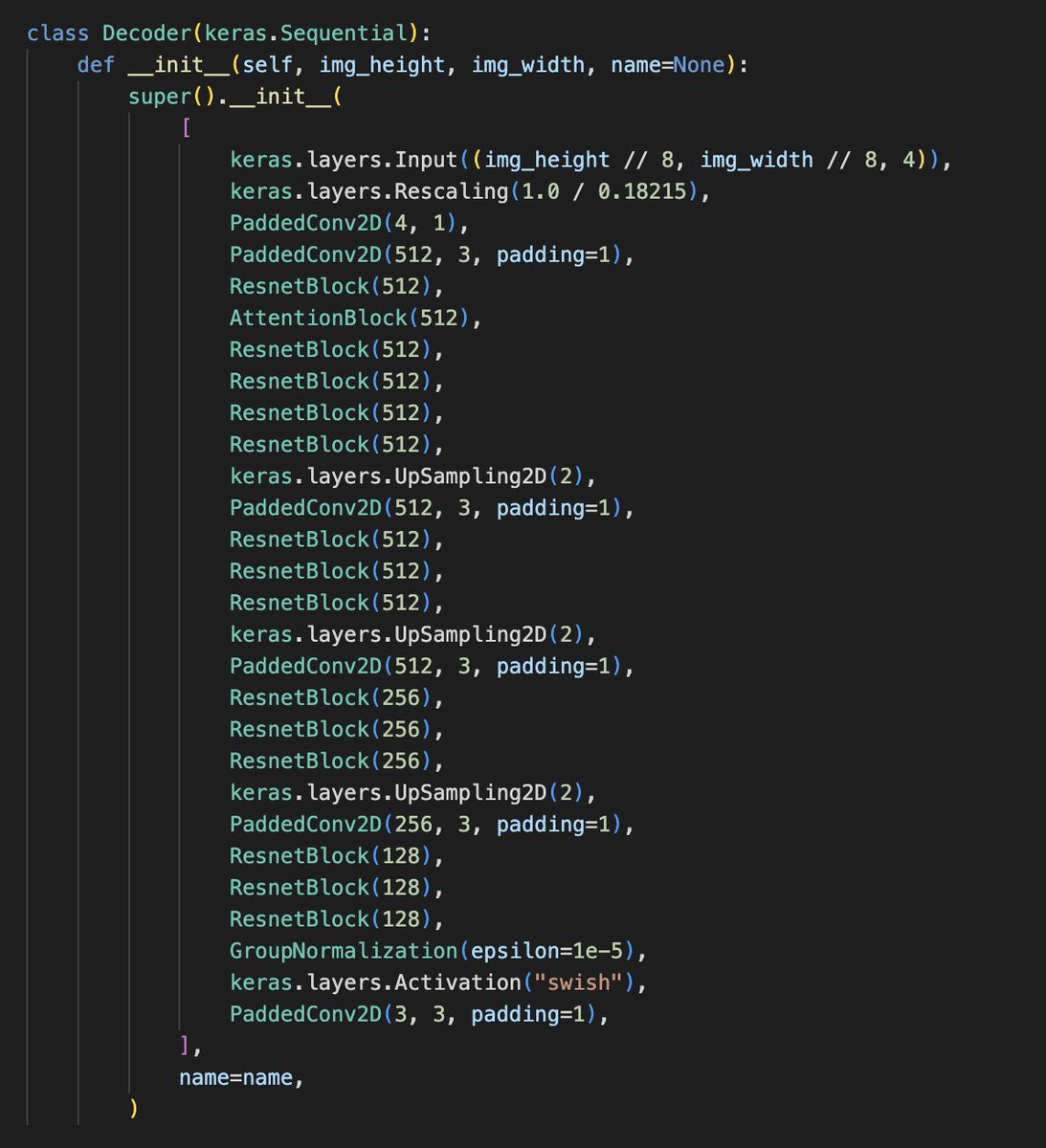

This is the final image decoder model. 86 LOC. https://t.co/fhoV9tVvNK
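The decoder works on a compressed latent rather than on pixels. The shape bookkeeping and the final conversion to displayable pixels can be sketched as follows, assuming the usual Stable Diffusion autoencoder: 8x spatial downsampling, 4 latent channels, latents scaled by 0.18215, and decoder output in [-1, 1], in channels-last layout. The constants and the helper names `latent_shape`, `unscale_latents` and `to_uint8_images` are assumptions, not read from the KerasCV source.

```python
import numpy as np

LATENT_DOWNSAMPLE = 8   # assumed spatial downsampling factor of the autoencoder
LATENT_CHANNELS = 4     # assumed number of latent channels
LATENT_SCALE = 0.18215  # assumed latent scaling constant


def latent_shape(img_height, img_width, batch_size=1):
    """Channels-last latent shape for a batch of images of the given size."""
    return (
        batch_size,
        img_height // LATENT_DOWNSAMPLE,
        img_width // LATENT_DOWNSAMPLE,
        LATENT_CHANNELS,
    )


def unscale_latents(latents):
    """Undo the latent scaling before handing latents to the decoder."""
    return np.asarray(latents) / LATENT_SCALE


def to_uint8_images(decoded):
    """Map decoder output from [-1, 1] to uint8 pixels in [0, 255]."""
    pixels = (np.asarray(decoded) + 1.0) / 2.0 * 255.0
    return np.clip(pixels, 0, 255).astype("uint8")
```

Under these assumptions, a 512x512 image corresponds to a 64x64x4 latent, which is a large part of why the UNet loop is affordable.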

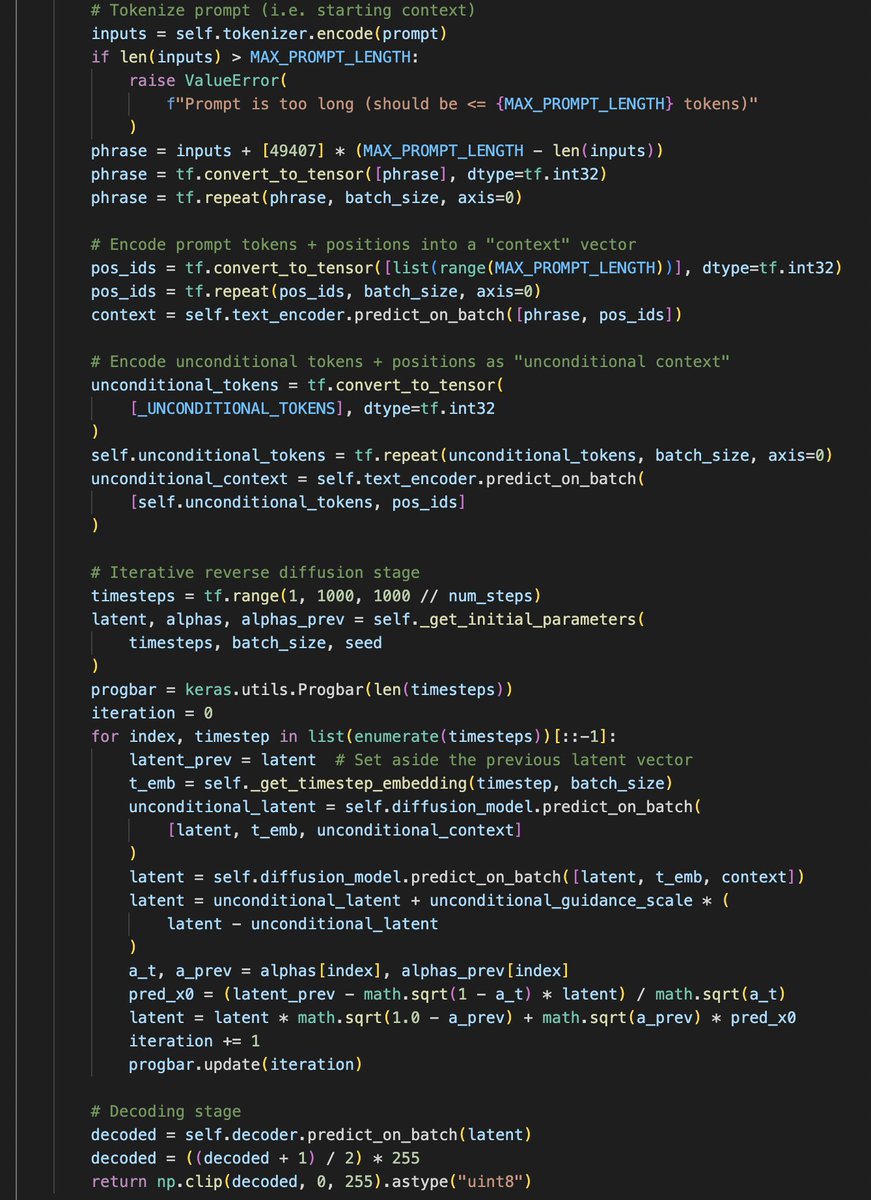

And finally, the image generation loop. https://t.co/eQ1lUgbpfR
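The generation loop ties the pieces together. Starting from random noise in latent space, it repeatedly asks the UNet to predict the noise and steps toward a clean latent, which the decoder then turns into an image. Below is a minimal NumPy sketch of a deterministic DDIM-style loop (eta = 0) with classifier-free guidance. It rests on several assumptions: a 1000-step "scaled linear" beta schedule, 50 sampling steps, a guidance scale of 7.5, and a hypothetical `predict_noise(latent, timestep, context)` callable standing in for the UNet. It has not been checked line by line against the linked code, so treat it as an illustration of the technique rather than the KerasCV loop itself.

```python
import numpy as np


def scaled_linear_alphas_cumprod(num_train_steps=1000, beta_start=0.00085, beta_end=0.012):
    """Cumulative products of (1 - beta) for an assumed 'scaled linear' beta schedule."""
    betas = np.linspace(beta_start ** 0.5, beta_end ** 0.5, num_train_steps) ** 2
    return np.cumprod(1.0 - betas)


def generate(predict_noise, context, unconditional_context, latent,
             num_steps=50, guidance_scale=7.5, num_train_steps=1000):
    """Deterministic DDIM sampling (eta = 0) with classifier-free guidance.

    predict_noise(latent, timestep, context) is a hypothetical stand-in for the UNet.
    """
    alphas_cumprod = scaled_linear_alphas_cumprod(num_train_steps)
    timesteps = np.arange(1, num_train_steps, num_train_steps // num_steps)
    # Walk from the noisiest timestep down to the cleanest one.
    for index in range(len(timesteps) - 1, -1, -1):
        t = timesteps[index]
        eps_uncond = predict_noise(latent, t, unconditional_context)
        eps_cond = predict_noise(latent, t, context)
        # Classifier-free guidance: push the noise estimate toward the prompt.
        eps = eps_uncond + guidance_scale * (eps_cond - eps_uncond)
        a_t = alphas_cumprod[t]
        a_prev = alphas_cumprod[timesteps[index - 1]] if index > 0 else 1.0
        # Estimate the clean latent, then re-noise it to the previous noise level.
        pred_x0 = (latent - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)
        latent = np.sqrt(a_prev) * pred_x0 + np.sqrt(1.0 - a_prev) * eps
    return latent
```

A handy sanity check: an "oracle" `predict_noise` that returns the exact noise for a known target latent makes every `pred_x0` equal that target, and because the last step uses `a_prev = 1.0`, the loop returns the target itself.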

Many thanks to all those who made this implementation possible, in particular @divamgupta @luke_wood_ml and of course the creators of the original Stable Diffusion models!